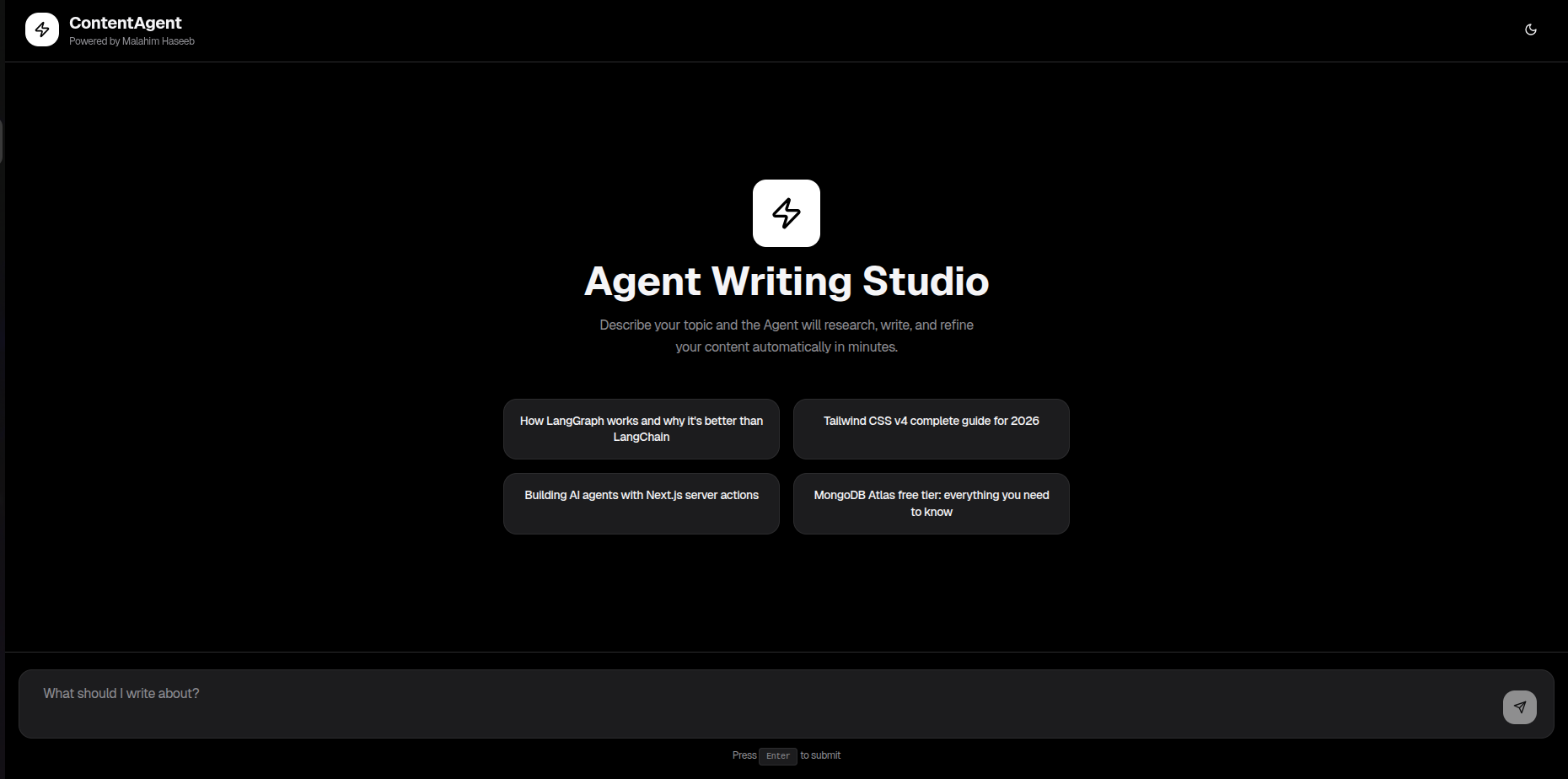

Contentagent — I Built a Multi-Agent Blog Writer in a Day

Yesterday I sat down with a rough idea: what if an AI could handle the entire blog writing pipeline autonomously? Research, draft, self-critique, format: all without a human in the loop. By the end of the day, Contentagent was live. Here's how it works, what I built it with, and what I learned.

The Idea

Most AI writing tools are wrappers — you prompt, it outputs. I wanted something different: an agent that decides its own next step. Does this topic need web research? Should the draft be revised? The agent figures that out, not you.

The result is a five-node LangGraph pipeline where each node is a specialist. The output isn't just text — it includes extracted metadata: title, description, tags, and reading time.

How It Works

Every blog request flows through a fixed graph. Here's what each node actually does:

Agent Workflow

Router

Analyzes the topic and decides whether web research is actually needed. Static topics skip search entirely — saving time and API credits.
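To make the routing decision concrete, here is a minimal sketch. It assumes a keyword heuristic stands in for the LLM call the Router makes; `needs_web_research` and `FRESHNESS_HINTS` are hypothetical names for illustration, not taken from the project.

```python
import re

# Hypothetical hints that a topic depends on recent information.
FRESHNESS_HINTS = ("latest", "news", "trends", "release", "pricing", "today")


def needs_web_research(topic: str) -> bool:
    """Return True when the topic looks time-sensitive.

    Assumption: a keyword check stands in for the LLM-based decision.
    """
    lowered = topic.lower()
    # A year between 2000 and 2099 suggests the topic is tied to a point in time.
    mentions_year = re.search(r"\b20\d{2}\b", lowered) is not None
    mentions_hint = any(
        re.search(rf"\b{re.escape(hint)}\b", lowered) for hint in FRESHNESS_HINTS
    )
    return mentions_year or mentions_hint


print(needs_web_research("Latest AI trends in 2025"))
print(needs_web_research("How binary search works"))
```

A heuristic like this is cheap but brittle, which is one reason to hand the decision to a model instead.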

Search

Runs dual Tavily API queries for broader coverage. Pulls fresh, real-world data and passes it as context to the writer.
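One way to picture the dual-query step is the sketch below. It assumes each result is a dict with `url` and `content` keys, and it takes the search client as an injected function so the example runs offline. The query wording, `dual_search`, and `fake_search` are all hypothetical.

```python
from typing import Callable

SearchFn = Callable[[str], list[dict]]


def build_queries(topic: str) -> list[str]:
    """Two phrasings of the same topic (hypothetical wording)."""
    return [topic, f"{topic} recent developments and examples"]


def dual_search(topic: str, search: SearchFn, limit: int = 6) -> list[dict]:
    """Run both queries and merge the results, dropping duplicate URLs."""
    seen: set[str] = set()
    merged: list[dict] = []
    for query in build_queries(topic):
        for hit in search(query):
            url = hit.get("url", "")
            if url and url not in seen:
                seen.add(url)
                merged.append(hit)
    return merged[:limit]


def fake_search(query: str) -> list[dict]:
    """Offline stand-in for the real search client."""
    shared = {"url": "https://example.com/a", "content": "shared result"}
    unique = {"url": f"https://example.com/{len(query)}", "content": query}
    return [shared, unique]


results = dual_search("vector databases", fake_search)
print([hit["url"] for hit in results])
```

Deduplicating by URL keeps the writer's context from repeating a source that both queries return.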

Writer

Google Gemini generates a full, structured blog post using the search context (or its own knowledge if search was skipped).

Critic

A second LLM pass reviews the draft for quality, clarity, and accuracy, then rewrites weak sections. Of the five nodes, this one improves the output the most.

Formatter

Cleans Markdown, enforces structure, and extracts metadata — title, description, tags, and estimated reading time.
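Part of the metadata step can be done with plain string handling. The sketch below assumes the title is the first level-1 heading, the description is the first body line, and reading time uses 200 words per minute. All three rules and the name `extract_metadata` are assumptions for illustration. Tags are left out because deriving them needs more than string handling.

```python
import math
import re


def extract_metadata(markdown: str, words_per_minute: int = 200) -> dict:
    """Pull title, description, and reading time out of a Markdown post."""
    lines = [line.strip() for line in markdown.splitlines() if line.strip()]
    # Level-1 headings start with "# "; any line starting with "#" is a heading.
    headings = [line[2:].strip() for line in lines if line.startswith("# ")]
    body = [line for line in lines if not line.startswith("#")]
    # Fall back to the first non-empty line when there is no level-1 heading.
    title = headings[0] if headings else (lines[0] if lines else "")
    # Trim the description to 160 characters.
    description = body[0][:160] if body else ""
    word_count = len(re.findall(r"\w+", " ".join(body)))
    # Round up, and never report less than one minute.
    reading_time = max(1, math.ceil(word_count / words_per_minute))
    return {
        "title": title,
        "description": description,
        "reading_time_minutes": reading_time,
    }


post = "# Hello World\n\nThis is the intro.\n\n## Section\n\n" + "word " * 450
meta = extract_metadata(post)
print(meta)
```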

START → Router → [Search?] → Writer → Critic → Formatter → END
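The flow above can be sketched without any framework. This is a dependency-free stand-in, not LangGraph's API: the node bodies are stubs, and every name here (`run_pipeline`, `next_node`, the `visited` key) is hypothetical.

```python
from typing import Callable

State = dict
Node = Callable[[State], State]


def router(state: State) -> State:
    # Stub decision; the real node asks an LLM.
    return {**state, "needs_search": "2025" in state["topic"]}


def search(state: State) -> State:
    return {**state, "context": f"search notes for {state['topic']}"}


def writer(state: State) -> State:
    source = state.get("context", "model knowledge")
    return {**state, "draft": f"Draft on {state['topic']} using {source}"}


def critic(state: State) -> State:
    return {**state, "draft": state["draft"] + " (revised)"}


def formatter(state: State) -> State:
    return {**state, "metadata": {"title": state["topic"].title()}}


NODES: dict[str, Node] = {
    "router": router,
    "search": search,
    "writer": writer,
    "critic": critic,
    "formatter": formatter,
}
# Fixed edges; the router's outgoing edge is chosen at run time.
NEXT = {"search": "writer", "writer": "critic", "critic": "formatter", "formatter": "END"}


def next_node(current: str, state: State) -> str:
    """Pick the next node; only the router has a conditional edge."""
    if current == "router":
        return "search" if state["needs_search"] else "writer"
    return NEXT[current]


def run_pipeline(topic: str) -> State:
    state: State = {"topic": topic, "visited": []}
    name = "router"
    while name != "END":
        state = NODES[name](state)
        state["visited"].append(name)
        name = next_node(name, state)
    return state


print(run_pipeline("Python decorators")["visited"])
print(run_pipeline("AI news 2025")["visited"])
```

Only the router's outgoing edge depends on state; every other transition is fixed, which matches the bracketed `[Search?]` step in the diagram.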

The Stack

The Next.js product and the open-source FastAPI backend share the same agent architecture — different stacks, same five-node pipeline.

Next.js Product

| Layer | Tech |
|---|---|
| Agent Orchestration | LangGraph + LangChain |
| LLM | Google Gemini (@langchain/google-genai) |
| Web Research | Tavily API |
| Frontend | Next.js 16 + React 19 + TypeScript |
| UI | shadcn/ui + Tailwind CSS + Radix UI |
| Deployment | Vercel |

FastAPI Backend (Open Source)

| Layer | Tech |
|---|---|
| Agent Orchestration | LangGraph + LangChain |
| LLM | Google Gemini (langchain-google-genai) |
| Web Research | Tavily API |
| Backend Framework | FastAPI + Python 3.10+ |
| Validation | Pydantic V2 |
| HTTP Client | httpx (async) |

What I Actually Learned

The Critic node is the biggest quality lever. Writer alone produces decent output. Writer + Critic produces something that actually reads well. That single extra LLM pass is worth the latency cost every time.

Agent state design is the real architecture challenge. The LangGraph graph definition is maybe 40 lines. The hard part was making sure each node received exactly what it needed — no more, no less — through the shared state object.
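A small sketch of what that state design can look like: one typed dict for the whole run, with each node returning only the keys it changes. The field names and the `apply_update` helper are assumptions for illustration, not the project's actual schema.

```python
from typing import TypedDict


class BlogState(TypedDict, total=False):
    """Shared state; total=False lets keys appear as nodes fill them in."""

    topic: str
    needs_search: bool
    context: str
    draft: str
    metadata: dict


def writer(state: BlogState) -> BlogState:
    """Reads topic and context; writes only the draft."""
    context = state.get("context", "")
    basis = "search context" if context else "model knowledge"
    return {"draft": f"Post about {state['topic']} based on {basis}"}


def apply_update(state: BlogState, update: BlogState) -> BlogState:
    """Merge a node's partial update into a copy of the shared state."""
    return {**state, **update}


state: BlogState = {"topic": "LangGraph basics", "needs_search": False}
state = apply_update(state, writer(state))
print(sorted(state))
```

Returning partial updates keeps each node's contract visible: what it reads shows up in the `state.get` calls, and what it writes shows up in the returned keys.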

Conditional routing is LangGraph's killer feature. The Router node deciding whether to call Search or skip straight to Writer — that conditional edge is what makes this feel like an agent rather than a chain. It's a small thing that changes everything about how you think about building these systems.

Try It Yourself

The full Next.js product is live at contentagent.malahim.dev. If you want to explore the agent architecture yourself, I've also open-sourced a clean FastAPI + Python implementation of the same five-node pipeline — same pattern, different stack, no frontend overhead.

```bash
git clone https://github.com/MalahimHaseeb/agent-writer-fastapi.git
cd agent-writer-fastapi
python3 -m venv venv && source venv/bin/activate
pip install -r requirements.txt

# Add to .env:
# GEMINI_API_KEY=your_key
# TAVILY_API_KEY=your_key

python main.py
```